Abstract

Many videos contain flickering artifacts; common causes of flicker include video processing algorithms, video generation algorithms, and capturing videos under specific situations. Prior work usually requires specific guidance such as the flickering frequency, manual annotations, or extra consistent videos to remove the flicker. In this work, we propose a general flicker removal framework that only receives a single flickering video as input without additional guidance. Since it is blind to a specific flickering type or guidance, we name this "blind deflickering." The core of our approach is utilizing the neural atlas in cooperation with a neural filtering strategy. The neural atlas is a unified representation for all frames in a video that provides temporal consistency guidance but is flawed in many cases. To address this, a neural network is trained to mimic a filter that learns the consistent features (e.g., color, brightness) while avoiding the artifacts in the atlas. To validate our method, we construct a dataset that contains diverse real-world flickering videos. Extensive experiments show that our method achieves satisfactory deflickering performance and even outperforms baselines that use extra guidance on a public benchmark.

Blind deflickering results

(1) Unprocessed flickering videos

Unprocessed videos can flicker for various reasons. For example, the brightness of old movies can be very unstable because the low-quality hardware in some old cameras could not keep the exposure time the same for every frame. In addition, indoor lighting changes at a certain frequency, and high-speed cameras can capture these rapid changes.
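The high-speed camera case can be illustrated with a small simulation. This is only a sketch under stated assumptions: the light is modeled as a sinusoid at 100 Hz (the intensity ripple typical of lamps on 50 Hz mains), each frame's brightness is the mean intensity over its exposure window, and the function name and parameters are hypothetical rather than taken from the paper.

```python
import numpy as np

def sampled_brightness(fps, light_hz=100.0, n_frames=8):
    """Mean light intensity seen by each frame (toy model, not from the paper).

    Assumes the exposure lasts the full frame interval and that the lamp
    intensity is 1 + 0.5 * sin(2 * pi * light_hz * t).
    """
    exposure_s = 1.0 / fps
    brightness = []
    for k in range(n_frames):
        t0 = k / fps  # start time of frame k
        t = np.linspace(t0, t0 + exposure_s, 1000)
        intensity = 1.0 + 0.5 * np.sin(2.0 * np.pi * light_hz * t)
        brightness.append(intensity.mean())
    return np.array(brightness)

# A 25 fps camera integrates four full light cycles per frame,
# so every frame sees roughly the same average brightness.
slow = sampled_brightness(fps=25)

# A 1000 fps camera integrates only a tenth of a cycle per frame,
# so consecutive frames land on different parts of the light waveform.
fast = sampled_brightness(fps=1000)

print("25 fps brightness range:  ", slow.max() - slow.min())
print("1000 fps brightness range:", fast.max() - fast.min())
```

Under this model the brightness range across frames is near zero at 25 fps and large at 1000 fps, which is the frame-to-frame brightness change perceived as flicker.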

(2) Generated flickering videos

Videos produced by video generation methods might be temporally inconsistent. Our approach can be used to improve the temporal consistency of video generation methods (e.g., Make-a-Video and MagicVideo) by removing flickering artifacts.

(3) Processed flickering videos

A temporally consistent unprocessed video might suffer from flickering artifacts after being processed by an algorithm. For example, a colorization algorithm can introduce flicker when it is applied to videos, as discussed in Deep Video Prior. Our approach can improve the temporal consistency of videos processed by different processing algorithms.

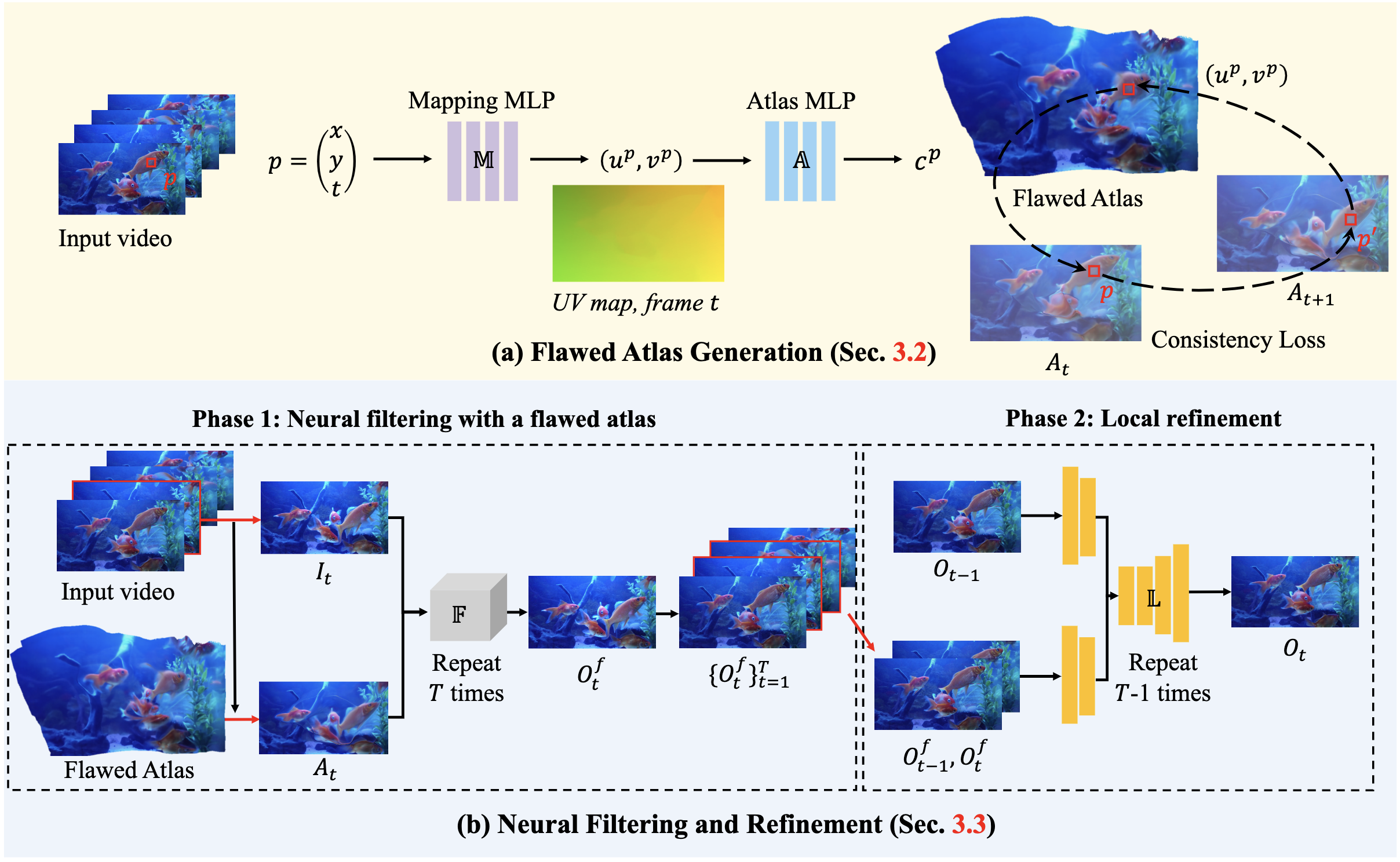

Method

We first generate an atlas as a unified representation of the whole video, providing consistent guidance for deflickering. Because the atlas is flawed, we then apply a neural filtering strategy that filters out its flaws.
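The two stages above can be illustrated with a toy example. It is not the authors' implementation: the per-pixel temporal median below is a stand-in for the neural atlas and is only meaningful for a static scene, and the hand-written gain correction is a stand-in for the trained filtering network. All function names are hypothetical.

```python
import numpy as np

def build_toy_atlas(frames):
    """Stand-in for the atlas: per-pixel temporal median of a static scene.

    frames has shape (T, H, W, 3). The actual method uses a learned
    neural atlas; this shortcut ignores motion entirely.
    """
    return np.median(frames, axis=0)

def toy_filter(frames, atlas, eps=1e-8):
    """Stand-in for neural filtering: take global color from the atlas,
    keep per-pixel detail from each input frame.

    Each frame is rescaled per channel so that its mean color matches
    the mean color of the atlas.
    """
    atlas_mean = atlas.mean(axis=(0, 1))              # shape (3,)
    frame_mean = frames.mean(axis=(1, 2))             # shape (T, 3)
    gain = atlas_mean / np.maximum(frame_mean, eps)   # shape (T, 3)
    return frames * gain[:, None, None, :]

def per_frame_brightness(frames):
    """Average intensity of every frame, used here as a flicker measure."""
    return frames.mean(axis=(1, 2, 3))

# Synthetic flickering video: one static image with a random gain per frame.
rng = np.random.default_rng(0)
base = rng.uniform(0.2, 0.8, size=(16, 16, 3))
gains = rng.uniform(0.7, 1.3, size=10)
flickering = base[None] * gains[:, None, None, None]

atlas = build_toy_atlas(flickering)
deflickered = toy_filter(flickering, atlas)

print("brightness std before:", per_frame_brightness(flickering).std())
print("brightness std after: ", per_frame_brightness(deflickered).std())
```

The split mirrors the idea in the text: the shared representation supplies temporally consistent color and brightness, while the per-frame input supplies the image content, so flaws in the shared representation are not copied directly into the output.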

BibTeX

@InProceedings{Lei_2023_CVPR,
    author    = {Lei, Chenyang and Ren, Xuanchi and Zhang, Zhaoxiang and Chen, Qifeng},
    title     = {Blind Video Deflickering by Neural Filtering with a Flawed Atlas},
    booktitle = {Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)},
    month     = {June},
    year      = {2023},
}